If you have taken part in any online discussions about radar detectors, then you know that manufacturers, retailers and so-called experts are extremely passionate about the brands they support. Countless tests, both scientific and anecdotal, extol the features, sensitivity and false-alarm rejection performance of a half-dozen key brands. Each test brings with it another heated debate about test criteria, conditions, software updates and the testers’ purported and, in some cases, stated biases. As an outsider who has never used a radar detector (they are illegal here), it’s fun to watch everyone argue. Each time it happens, I think to myself, why don’t car audio manufacturers, retailers and enthusiasts want similar comparisons of source units, amplifiers, speakers and processors?

The Good Old Days of Car Audio Magazines

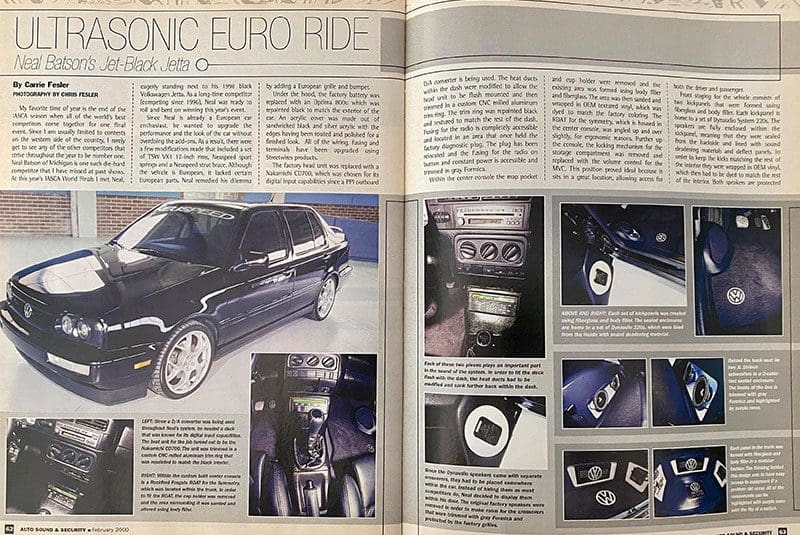

Decades ago, the car audio industry had four main magazines: Car Audio and Electronics, Car Stereo Review, Auto Sound & Security, and Car Sound. Most of us had subscriptions to all four publications and waited anxiously each month to see cool new builds, read technical articles and check out product test reports and comparisons.

For audio components, comparisons are straightforward. Testing an amplifier under controlled conditions isn’t difficult: a stable supply voltage and fixed load impedances, combined with test data from hardware like the Audio Precision System One, made it easy to compare power production, noise and distortion characteristics.

What were the drawbacks of comparison tests? Well, someone has to win and someone has to lose. The winner was pretty much guaranteed increased sales. For the products that didn’t fare as well in the comparisons, retailers would be forced to focus on specific features or positive comments, and avoid areas where other products performed better.

Now that all of the North American car audio magazines are gone, all we have left are blog posts and YouTube videos. Nobody is making comparisons, and very few are still reviewing products with any semblance of technical measurement. Frankly, I miss that. Car & HiFi magazine from Germany is a notable exception and is still producing great product reviews.

Why Do Measurements Matter?

In the ’80s, ’90s and early 2000s, car audio enthusiasts would spend countless hours comparing the specifications of the products they wanted to buy. Amp distortion, efficiency and power ratings, source unit tuner sensitivity and selectivity, and pre-amp voltage and output impedance all mattered to consumers. They may not have understood the absolute value of each number, but they knew what was mediocre, good or great.

Armed with enough information, you could get a very good idea of the quality of a product. Consumers would gravitate toward products that performed well, and their audio systems would sound better as a result.

What happened to this philosophy? Why don’t numbers seem to matter? SPL enthusiasts eat up YouTube power production test videos like they are candy. Sadly, those videos don’t do anything to quantify the quality of the products being tested. Sound quality enthusiasts have very little tangible information available to compare source units, processors, amplifiers and, worst of all, speakers and subwoofers.

Who among you has seen a distortion graph for a speaker? They are relatively easy to create, and they can tell you a lot about the characteristics of a driver and its suitability for a specific application. Even high-end home audio magazines like Stereophile, which excel at electronic measurements, fall short on speaker evaluation. Their speaker reviews include frequency response, directivity, spectral decay and impedance graphs, all of which are quite useful. Sadly, no distortion graphs are created.

Speaker distortion graphs can highlight non-linearities in suspension design and motor design and resonances in the cone, dust cap and surround. All of these characteristics add unwanted information to the audio signal and detract from the clarity of the listening experience.
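To give a rough sense of what goes into one point on a distortion graph, here is a minimal Python sketch that estimates total harmonic distortion (THD) for a single test tone. It is an illustration under stated assumptions, not a description of how any magazine or lab performs the measurement. The function names (`tone_amplitude`, `thd_percent`) are made up for this example, and the "recording" is a synthetic 100 Hz tone with 3% second-harmonic and 1% third-harmonic content standing in for a real microphone capture of a driver.

```python
import math

def tone_amplitude(samples, sample_rate, freq):
    """Amplitude of the sinusoidal component at `freq`, found by
    correlating the signal with a cosine and a sine (a single-bin DFT)."""
    n = len(samples)
    step = 2 * math.pi * freq / sample_rate
    re = sum(s * math.cos(step * i) for i, s in enumerate(samples))
    im = sum(s * math.sin(step * i) for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n

def thd_percent(samples, sample_rate, fundamental, max_harmonic=5):
    """THD as a percentage: RMS sum of harmonics 2..max_harmonic
    divided by the amplitude of the fundamental."""
    fund = tone_amplitude(samples, sample_rate, fundamental)
    harmonics = [
        tone_amplitude(samples, sample_rate, fundamental * k)
        for k in range(2, max_harmonic + 1)
    ]
    return 100 * math.sqrt(sum(h * h for h in harmonics)) / fund

# Assumed test signal (hypothetical values, not measured data):
# 0.1 s at 48 kHz, so the window holds a whole number of cycles
# of the 100 Hz fundamental and each harmonic.
sample_rate = 48000
num_samples = 4800
samples = [
    math.sin(2 * math.pi * 100 * i / sample_rate)
    + 0.03 * math.sin(2 * math.pi * 200 * i / sample_rate)
    + 0.01 * math.sin(2 * math.pi * 300 * i / sample_rate)
    for i in range(num_samples)
]

thd = thd_percent(samples, sample_rate, 100.0)
print(f"THD at 100 Hz: {thd:.2f}%")
```

Repeating this calculation across a sweep of test frequencies, and plotting the result against frequency, would produce the kind of graph discussed above. A real measurement would also need a calibrated microphone, a controlled drive level and a quiet environment, none of which this sketch attempts to model.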